Today I’m going to address one of the most fundamental questions in neuroscience: how do our brains learn?

People often compare the brain to a computer: both are able to process and store tons of information. But computers (generally) don’t learn. Your computer doesn’t mature over time or change its programming based on what worked and what didn’t. That’s why the same annoying habits or bugs will keep bothering you until you upgrade its software or smash it with a baseball bat.

We, on the other hand, learn new things every day. Anything you can remember is something you’ve learned, whether it’s what you had for breakfast this morning or the name of your third grade teacher or how to solve differential equations. Many memories are subconscious, like the aversion you feel toward the smell of tequila, or the joy that overcomes you when you see a dear old friend for the first time in years.

Some memories are ephemeral, lasting minutes or hours; others may last a lifetime. PTSD, for example, involves an unfortunate, extreme form of learning whose memories are very hard to forget.

Learning, at its very core, is when prior experience alters your future behavior. The experience could be factual, like hearing someone introduce himself on a first date, or it could be emotional, like enduring the crappiest first date ever. Both experiences change your future behavior. Now when you see this guy on the street, you’ll behave differently than the first time: you’ll address him by his name, and you’ll try to get the hell out of there before he asks you out again.

Theories of learning

So how does this work? How does experience change your brain to alter your future behavior?

Back in the early to mid-20th century, scientists had a lot of different ideas about how the brain learns. Some people thought that learning altered the electrical potential or chemical gradients across the brain. Others hypothesized that memories are stored in dynamic interactions between many brain cells, called neurons, or required the birth of new neurons. Still others suggested that learning occurred at a sub-cellular, molecular level by changing the composition of DNA or RNA, which all cells contain.1

None of these theories had much evidence going for them, and they weren’t easy to test. In order to study how the brain learns, first you need to focus on a particular example of learning and figure out which neurons are involved. Then you need to return to the same neurons before and after learning to see how they’ve changed. Even today, this is very difficult to do in the mammalian brain, where there are thousands of neurons in every brain region that all pretty much look the same.

Photo of Aplysia (credit: G. Anderson via Wikimedia Commons)

In the 1960s, an intrepid neuroscientist named Eric Kandel decided to tackle the question of learning by studying a lowly sea slug called Aplysia. You can imagine the jeering he received from other scientists who believed that invertebrates were far too different from humans to teach us anything useful. As Kandel relates, “Few self-respecting neurophysiologists, I was told, would leave the study of learning in mammals to work on an invertebrate.”

But Aplysia offered several advantages. These slugs have a relatively small number of neurons, making it simpler to figure out which ones are doing what. The neurons are large, making them easier to study, and many can be uniquely identified in each slug. So once Kandel figured out which neurons to focus on, he could recognize them in different animals as well as return to them multiple times in the same animal to see how they change during learning.

Learning in sea slugs

So Kandel had a great animal in which to study how the brain learns. Now the question was, can slugs actually learn anything?

Fortunately, Kandel and his colleagues discovered some very simple forms of learning in Aplysia. Aplysia has a respiratory organ called a gill, which normally sticks out into the water. But when you touch the gill, it rapidly retracts into the body cavity for protection. This is not learning—it’s just a defensive reflex. But the reflex can be modified by learning.

One form of learning, called habituation, means that if you repeatedly touch the gill the slug stops retracting it. It’s like when your kid keeps incessantly calling for you for no reason—you pay attention the first time, but if he doesn’t actually need anything you start ignoring him. (Yes, I’m thinking of this clip.)

Another form of learning is called sensitization. This is the opposite of habituation: you show a greater response than normal because something has alerted you to pay extra attention. In Aplysia, this happens when you give a shock to a different part of its body, such as its tail. The shock causes the slug to enhance its gill withdrawal reflex because it senses that there might be something dangerous around.

Cartoon of Aplysia’s gill withdrawal reflex. Normally the gill is extended (left), but touching it causes it to retract (center). Sensitization induced by tail shock enhances this response, causing the gill to retract even further (right). Habituation has the opposite effect and decreases the retraction response. (from Kandel, 2001)

Dissecting learning, cell by cell

To understand how Aplysia learns to modify its gill withdrawal reflex, Kandel and his colleagues first needed to figure out which neurons were involved in this behavior. They identified the sensory neurons that sense touch on the gill and the motor neurons that control gill withdrawal. The sensory and motor neurons turned out to be directly connected.

Let me briefly digress to explain what we mean when we say these neurons are “connected”. Neurons communicate with each other at structures called synapses. At synapses, one neuron (the presynaptic cell) releases chemicals that can either activate or suppress a second neuron (the postsynaptic cell). This is how information is transmitted in the brain.

Cartoon of neurons connected by synapses. (credit: Simple Biology, modified)

So in this case, the sensory and motor neurons controlling gill withdrawal are connected by a synapse, through which the sensory neurons tell the motor neurons that something’s touching the gill. The motor neurons then cause gill withdrawal. This is pretty much the simplest brain circuit you can find, and it means that any learning-related changes in the reflex must be occurring somewhere in these few neurons.

Kandel and his colleagues found that these neurons weren’t changing who they were connected to or how they were functioning in general. Certainly no new neurons were being born, and there weren’t any large-scale changes across neuronal populations.

Data showing how sensitization works. The curves represent electrical signals from neurons, with upward deflections signifying activation. Sensitization induced by tail shock doesn’t change the response of the sensory neuron, but it increases the response of the motor neuron, which enhances gill withdrawal. (from Kandel Nobel Lecture)

Instead, learning-related changes were specifically localized to the synapse between the sensory and motor neurons, altering how strongly information was being transmitted.

During habituation, the synapse is weakened. The sensory neurons are still activated by touch, but they don’t transmit that information to the motor neurons as strongly. So the activation of the motor neurons, and consequently the withdrawal reflex, is weaker than usual.

During sensitization, the synapse is strengthened. Even mild activation of the sensory neurons strongly excites the motor neurons, which induce a strong withdrawal reflex.2
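To make the volume-knob idea concrete, here is a toy sketch in Python. It is not Kandel's model or real physiology: the `Synapse` class, the unitless `strength` number, and the multipliers 0.7 and 2.0 are all assumptions invented for illustration. The only thing it borrows from the experiments is the logic: the touch signal stays the same, and only the strength of the connection changes.

```python
from dataclasses import dataclass


@dataclass
class Synapse:
    """Toy sensory-to-motor synapse (hypothetical; strength is unitless)."""
    strength: float = 1.0

    def transmit(self, sensory_signal: float) -> float:
        # The motor neuron's response scales with the synaptic strength.
        return self.strength * sensory_signal

    def habituate(self, factor: float = 0.7) -> None:
        # A harmless touch weakens the synapse (factor < 1 is an assumption).
        self.strength *= factor

    def sensitize(self, factor: float = 2.0) -> None:
        # A tail shock strengthens the synapse (factor > 1 is an assumption).
        self.strength *= factor


def repeated_touches(synapse: Synapse, touch: float = 1.0, n: int = 5) -> list:
    """Touch the gill n times; record each motor response, then habituate."""
    responses = []
    for _ in range(n):
        responses.append(synapse.transmit(touch))
        synapse.habituate()
    return responses
```

Calling `repeated_touches(Synapse())` returns a series of responses that shrinks with every touch, which is habituation. Calling `sensitize()` on a fresh `Synapse` first makes the very same touch produce a bigger response, which is sensitization.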

It’s all about the connections

With these experiments, Kandel had discovered the basis for how our brains learn: by changing the strength of synapses to adjust how loudly neurons talk to each other. Our brains turn up the volume for connections that are important, and turn down or even mute connections that aren’t helpful.

This is what’s happening every time you form a new memory. Like if you try a new type of candy that ends up being delicious, your brain strengthens the connections between the neurons that perceive the image of the candy and those that make you want to eat it. Or if you get stuck between stations on the A train for half an hour, your brain weakens the connections between the neurons that store your memory of the A train and those that make you want to ride it.

The actual mechanism of HOW synapses are strengthened or weakened is an incredibly complex process, but it basically involves changing the number or type of molecules that make up the synapse. The key change is that the postsynaptic cell adjusts the number of receptors that it uses to listen to what the presynaptic cell is saying. Having more receptors is like turning up the volume, and vice versa.

Cartoon showing how learning can strengthen a synapse by increasing the number of receptors in the postsynaptic cell that respond to chemicals from the presynaptic cell. (credit: T. Sulcer via Wikimedia Commons, modified)

The specific molecular changes that are implemented determine whether it’s a short-term or long-term memory. Super long-term memories also involve structural changes on a larger scale: entirely new synapses can grow or existing synapses can be destroyed.

Brain versus machine

Kandel in 2013 (credit: B. Oberger via Wikimedia Commons)

The work of Kandel and colleagues laid the foundation for our understanding of how the brain learns, and it earned Kandel a share of the 2000 Nobel Prize in Physiology or Medicine. We now know that the basic unit of learning isn’t a brain region or a molecule or even a neuron: it’s the synapse.3 This is true all the way from slugs and bugs to mice and humans.

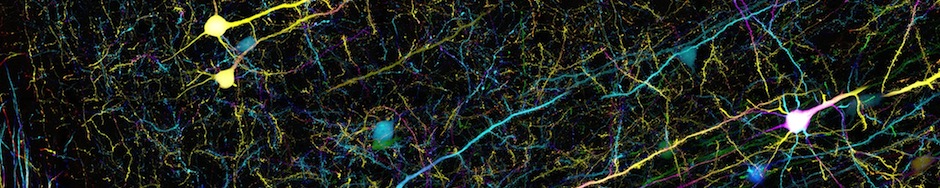

Since each neuron makes many synapses (a thousand on average in the human brain), individual brain cells can be involved in storing many different kinds of memories through different connections. And your brain contains about 100 trillion synapses! Each one of these minuscule structures is capable of being modified or removed or regrown, bestowing us with an incredible capacity for learning.
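As a quick sanity check on those two numbers, here is the back-of-the-envelope arithmetic in Python. It takes the round figures quoted above at face value; the variable names are mine, and the result is only the neuron count those figures imply, not a measured value.

```python
# Round figures quoted in the text above.
SYNAPSES_IN_BRAIN = 100 * 10**12   # about 100 trillion synapses
SYNAPSES_PER_NEURON = 1_000        # about a thousand per neuron, on average

# How many neurons would that imply?
implied_neurons = SYNAPSES_IN_BRAIN // SYNAPSES_PER_NEURON
print(f"{implied_neurons:,}")  # prints 100,000,000,000
```

In other words, the two figures together imply on the order of a hundred billion neurons.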

That’s what makes your brain different from a machine. It’s constantly changing, its tiny pieces continually growing and retracting, strengthening and weakening, making it possible for you to learn from your experiences, adapt to new situations, and store a lifetime of memories.

Notes:

1. These old ideas are described in Kandel’s Nobel Lecture and Nobel Prize Biography, which also tell the whole story of how he came to make his breakthrough discoveries.

2. Here are some of the original papers in which Kandel and colleagues reported their findings:

Kupfermann I, Castellucci V, Pinsker H, Kandel E. Neuronal correlates of habituation and dishabituation of the gill-withdrawal reflex in Aplysia. Science 167:1743-1745 (1970).

Castellucci V, Pinsker H, Kupfermann I, Kandel ER. Neuronal mechanisms of habituation and dishabituation of the gill-withdrawal reflex in Aplysia. Science 167:1745-1748 (1970).

3. Of course, there are exceptions to every rule; some forms of learning and memory don’t involve changes at the synapse. For example, working memory, which is when you store information for short periods of time while performing a task, is thought to be mediated by dynamic activity within neuronal networks.
